This is the sequel to Shape injectivity of the earring space (Part I)

We’re on our way to proving the canonical homomorphism from the earring group to the inverse limit of free groups is injective. Part I was mostly dedicated to proving that an inverse limit of trees contains no simple closed curve. I’d like to reiterate here that the proof I’m detailing is almost completely self-contained: we only need to review two classical results from continuum theory, both of which are proved in Sam Nadler’s very readable book [2].

As a bonus, I hope you’ll find, in this two-part post, another excellent example of why general mathematical theory is worth developing. Our proof uses several existence/structure theorems that, by themselves, don’t tell you how to do anything practical. However, they can be used together to prove the Shape Injectivity Theorem, which does provide something concrete: a practical way to study and do calculations in the most fundamental class of groups with non-commutative infinite products.

Peano Continua and Dendrites

Definition: A Peano continuum is a connected, locally path-connected, compact metrizable space.

The following theorem is an important characterization of Peano continua (See [Nadler, 8.18]) that every topologist should keep in their back pocket.

Hahn-Mazurkiewicz Theorem: A space $X$ is a Peano continuum if and only if it is Hausdorff and there is a continuous surjection $[0,1]\to X$.

Definition: A dendrite is a Peano continuum containing no simple closed curve.

Based on an early lemma from Part I, we could define a dendrite to be a uniquely arc-wise connected Peano continuum. Intuitively, a dendrite is a one-dimensional Peano continuum without any holes.

First, consider the “arc hedgehog” space, which is a one-point union of a sequence of arcs whose lengths shrink to zero. This space came up in an early post about the category of locally path-connected spaces. It’s easy to see that the arc hedgehog is uniquely arc-wise connected and is therefore a dendrite.

How complicated can a dendrite be? Start with the arc hedgehog, which has a single branch point, meaning that if we delete it, the subspace left has at least 3 components. At the midpoint of a segment, attach a copy of the arc hedgehog scaled down to a smaller diameter. Continue the process inductively in a dense pattern to construct the following dendrite called Wazewski’s Universal Dendrite.

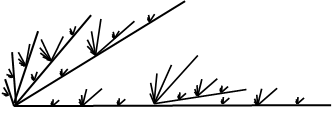

Wazewski’s Universal Dendrite

Notice there are no open sets in the Universal Dendrite homeomorphic to an open interval. In fact, this dendrite contains a homeomorphic copy of every dendrite as a retract. Hence, as far as dendrites go, the universal dendrite is as complicated as a dendrite can be.

The second result we’ll need from continuum theory provides us with a convenient way of writing down a dendrite as an inverse limit.

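Dendrite Structure Theorem [Nadler, 10.27]: Every dendrite $D$ is homeomorphic to the inverse limit $\varprojlim_n(T_n,r_{n+1,n})$ of a sequence of trees where $T_{n+1}=T_n\cup A_{n+1}$, $A_{n+1}$ is an arc, and the bonding retractions $r_{n+1,n}:T_{n+1}\to T_n$ collapse the arc $A_{n+1}$ to the attachment point $T_n\cap A_{n+1}$.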

This structure theorem only tells you that some inverse system exists; it doesn’t help you find one. It’s a good exercise to figure out how you’d actually realize some dendrites as inverse limits of trees in the specified fashion. I recommend working out the arc-hedgehog space and Wazewski’s Universal Dendrite as examples. Hint: create an inverse system by enumerating the arcs by size.
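For readers who want to experiment with the hint, here is a minimal Python sketch of one possible inverse system for the arc hedgehog. Everything in it is an assumption made for illustration: a point is encoded as a pair `(arm, s)` with `s` a parameter in `[0, 1]` along the arm, `T_n` is taken to be the wedge of the first `n` arms, and the hypothetical function `bond` plays the role of the bonding retraction that collapses the newest arc to the wedge point.

```python
from typing import List, Tuple

# Illustrative encoding (an assumption, not from the text):
# (arm index >= 1, parameter s in [0, 1]); s == 0 is the wedge point.
Point = Tuple[int, float]
WEDGE: Point = (1, 0.0)  # one fixed name for the wedge point


def normalize(p: Point) -> Point:
    """Every (arm, 0) names the same wedge point; pick one representative."""
    arm, s = p
    return WEDGE if s == 0 else (arm, s)


def in_tree(p: Point, n: int) -> bool:
    """Is p a point of T_n, the wedge of the first n arms?"""
    arm, s = normalize(p)
    return 1 <= arm <= n and 0.0 <= s <= 1.0


def bond(p: Point, n: int) -> Point:
    """Bonding retraction T_{n+1} -> T_n: collapse arm n+1 to the wedge point."""
    assert in_tree(p, n + 1)
    arm, s = normalize(p)
    return WEDGE if arm == n + 1 else (arm, s)


def thread(p: Point, length: int) -> List[Point]:
    """First `length` coordinates of the inverse-limit point determined by p."""
    arm, s = normalize(p)
    return [(arm, s) if arm <= n else WEDGE for n in range(1, length + 1)]


coords = thread((3, 0.5), 5)
print(coords)
# Coherence: the bonding map sends the (k+1)-st coordinate to the k-th one.
print(all(bond(coords[k], k) == coords[k - 1] for k in range(1, 5)))
```

A point on the third arm is invisible in `T_1` and `T_2` (it sits at the wedge point there) and shows up from `T_3` onward, which is the sense in which the arcs are "enumerated" by the inverse system.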

We’ll use the Dendrite Structure Theorem to prove the last technical ingredient we’ll need. It appears as Exercise 10.51 in [2] and, I believe, is usually attributed to Borsuk.

Theorem: Dendrites are contractible.

Originally, I had posted here an inverse-limit proof using the Dendrite Structure Theorem, but it was not correct. At some point, I’ll come back and post a corrected proof using inverse limits. There is also a way to prove dendrites are contractible using metrization theory. Every dendrite $D$ admits a metric $d$ such that for all distinct $x,y\in D$, there is an isometric embedding $\gamma_{x,y}:[0,d(x,y)]\to D$ onto the unique arc with endpoints $\gamma_{x,y}(0)=x$ and $\gamma_{x,y}(d(x,y))=y$ (this is called an $\mathbb{R}$-tree metric). We fix $v\in D$ and define a contraction $H:D\times[0,1]\to D$ so that $H(x,t)=\gamma_{v,x}(t\,d(v,x))$ for $x\neq v$ and $H(v,t)=v$. Then $H(x,1)=x$, $H(x,0)=v$ and one can show without too much difficulty that $H$ is continuous.
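To make the contraction concrete, here is a small Python sketch on a hedgehog of arcs, where the $\mathbb{R}$-tree metric can be written down by hand. The encoding is hypothetical and chosen only for illustration: a point is a pair `(arm, s)` with `s` its distance from the wedge point, arm lengths are not enforced, and `contract(v, x, t)` returns the point on the arc from `v` to `x` at distance `t * d(v, x)` from `v`.

```python
from typing import Tuple

# Illustrative encoding (an assumption): (arm index, distance from the wedge point).
Point = Tuple[int, float]


def norm(p: Point) -> Point:
    """All points at distance 0 are the wedge point; use one representative."""
    arm, s = p
    return (1, 0.0) if s == 0 else (arm, s)


def dist(p: Point, q: Point) -> float:
    """R-tree metric on the hedgehog: length of the unique arc from p to q."""
    (a, s), (b, t) = norm(p), norm(q)
    return abs(s - t) if a == b else s + t


def geodesic(p: Point, q: Point, u: float) -> Point:
    """Point at distance u from p along the unique arc from p to q."""
    (a, s), (b, t) = norm(p), norm(q)
    if a == b:
        return norm((a, s + u if t >= s else s - u))
    # Different arms: walk down arm a to the wedge point, then up arm b.
    return norm((a, s - u)) if u <= s else norm((b, u - s))


def contract(v: Point, x: Point, t: float) -> Point:
    """The point on the arc from v to x at distance t * d(v, x) from v."""
    return geodesic(v, x, t * dist(v, x))


v, x = (2, 0.25), (3, 0.125)
print(contract(v, x, 1.0))  # (3, 0.125), i.e. x
print(contract(v, x, 0.0))  # (2, 0.25), i.e. v
print(contract(v, x, 0.5))  # (2, 0.0625), halfway along the arc from v to x
```

The sketch only checks the endpoint conditions on one example; it says nothing about continuity, which is the part of the argument that needs the metric.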

Proof of the Shape Injectivity Theorem

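Finally, we get to the point. Ok, remember the homomorphism $\Psi:\pi_1(\mathbb{E},b_0)\to\varprojlim_n F_n$, $\Psi([\alpha])=([r_1\circ\alpha],[r_2\circ\alpha],[r_3\circ\alpha],\dots)$ from the first post? Here $\mathbb{E}$ denotes the earring space with wedge point $b_0$, $X_n$ is the wedge of the first $n$ circles, $r_n:\mathbb{E}\to X_n$ is the canonical retraction, and $F_n=\pi_1(X_n,b_0)$. To show $\Psi$ is injective, we’ll show $\ker\Psi$ is trivial. Suppose that $\alpha:[0,1]\to\mathbb{E}$ is a loop based at $b_0$ such that $\alpha_n=r_n\circ\alpha$ is null-homotopic for every $n\geq 1$. We must show that $\alpha$ is null-homotopic in $\mathbb{E}$.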
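As a sanity check on what the coordinates of this homomorphism look like, here is a hedged Python sketch restricted to loops represented by finite words (the earring group also contains infinite products, which this toy model does not capture). The encoding is an assumption for illustration: the integer `k` stands for the loop around the `k`-th circle and `-k` for its inverse, and the hypothetical `project(w, n)` computes the class in the free group on the first `n` generators.

```python
from typing import List

Word = List[int]  # k stands for the k-th generator, -k for its inverse


def reduce_word(w: Word) -> Word:
    """Free reduction: cancel adjacent pairs k, -k until none remain."""
    out: Word = []
    for letter in w:
        if out and out[-1] == -letter:
            out.pop()
        else:
            out.append(letter)
    return out


def project(w: Word, n: int) -> Word:
    """Image in the free group on n generators: delete letters with index > n, then reduce."""
    return reduce_word([k for k in w if abs(k) <= n])


def psi(w: Word, depth: int) -> List[Word]:
    """First `depth` coordinates of the canonical homomorphism on the class of w."""
    return [project(w, n) for n in range(1, depth + 1)]


commutator = [1, 2, -1, -2]
print(psi(commutator, 3))  # [[], [1, 2, -1, -2], [1, 2, -1, -2]]
```

The commutator dies in the first coordinate but survives in the second, so a single trivial coordinate carries no information; the hypothesis in the proof is that every coordinate is trivial.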

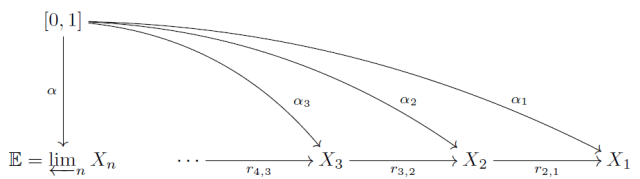

Each space $X_n$ is a wedge of circles and therefore has a universal covering space $\widetilde{X}_n$, which is an infinite tree. In particular, $\widetilde{X}_n$ is the Cayley graph of the free group $F_n$. Let $p_n:\widetilde{X}_n\to X_n$ be the universal covering map.

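After making a choice of vertex basepoints $\tilde{x}_n\in p_n^{-1}(b_0)$, notice that the map $r_{n+1,n}\circ p_{n+1}:\widetilde{X}_{n+1}\to X_n$ from the simply connected covering space has a unique lift $\tilde{r}_{n+1,n}:\widetilde{X}_{n+1}\to\widetilde{X}_n$ such that $\tilde{r}_{n+1,n}(\tilde{x}_{n+1})=\tilde{x}_n$. Here $r_{n+1,n}:X_{n+1}\to X_n$ is the bonding retraction of the original inverse system.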

This gives us an inverse system of based covering maps.

Consider the inverse limit $\varprojlim_n p_n:\varprojlim_n\widetilde{X}_n\to\varprojlim_n X_n=\mathbb{E}$. There are two things to be wary of: 1. the inverse limit of path-connected spaces is not always path-connected, and 2. an inverse limit of covering maps is not usually a covering map. But these general failures are not a deal-breaker in our situation.

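First, pick a path component of $\varprojlim_n\widetilde{X}_n$. Specifically, take $\widetilde{\mathbb{E}}$ to be the path component containing the basepoint $\tilde{b}_0=(\tilde{x}_1,\tilde{x}_2,\tilde{x}_3,\dots)$. Let $p:\widetilde{\mathbb{E}}\to\mathbb{E}$ be the restriction of $\varprojlim_n p_n$.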

While the map $p$ is not a traditional covering map, it is surjective and it enjoys all of the usual lifting properties of a covering map. It is a kind of “generalized covering map.” Also, the space $\widetilde{\mathbb{E}}$ is not a dendrite. In fact, with the subspace topology inherited from the inverse limit, it’s not even locally path connected. However, if you apply the locally path-connected coreflection to it, the result is a true generalized universal covering in the sense of [1]. For our purposes, we only need to recognize that $\widetilde{\mathbb{E}}$ is sitting inside an inverse limit of trees.

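Lemma: $\widetilde{\mathbb{E}}$ is uniquely arc-wise connected. In particular, it contains no simple closed curves.

Proof. The main theorem proved in Part I is that the limit of an inverse system of trees contains no simple closed curves. Since each covering space $\widetilde{X}_n$ is a tree, this theorem applies and we conclude that $\varprojlim_n\widetilde{X}_n$ contains no simple closed curve. In particular, the path component $\widetilde{\mathbb{E}}$ contains no simple closed curve. Since $\widetilde{\mathbb{E}}$ is a path-connected Hausdorff space, it is arc-wise connected, and two distinct arcs with the same endpoints would produce a simple closed curve; hence $\widetilde{\mathbb{E}}$ is uniquely arc-wise connected.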

Recall that $\alpha$ is a loop based at $b_0$ such that, for all $n\geq 1$, the projection loop $\alpha_n=r_n\circ\alpha$ onto the wedge of $n$ circles is null-homotopic in $X_n$. We are looking to build a null-homotopy of $\alpha$ from a choice of null-homotopies of the approximating loops $\alpha_n$ despite the fact that these null-homotopies might seem completely unrelated.

Let be the unique lift of

starting at

, i.e. so that

. Since

is null-homotopic in

, it must be the case that each

is actually a loop based at

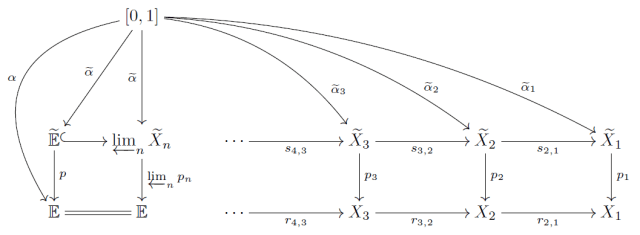

. The diagram below shows the following equalities hold:

Since preserves the basepoints (by construction) and

is the unique lift of

starting at

, the equality above tells us that

. This means the lifted loops

agree with the bonding maps of the covering space inverse system. The universal property of the top inverse system hands us a unique loop

based at

satisfying

.
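The fact that a null-homotopic loop lifts to a closed loop in the tree can be checked by hand for edge loops. Below is a minimal Python sketch under an illustrative encoding that is not from the post: a vertex of the Cayley graph is a reduced word stored as a tuple of nonzero integers (`k` for the `k`-th generator, `-k` for its inverse), and the hypothetical `lift` records the vertices visited by the lift of an edge loop starting at the identity vertex `()`.

```python
from typing import List, Tuple

Vertex = Tuple[int, ...]  # a reduced word, i.e. a vertex of the Cayley graph


def step(v: Vertex, letter: int) -> Vertex:
    """Cross the edge labelled `letter` out of v (right multiplication)."""
    return v[:-1] if v and v[-1] == -letter else v + (letter,)


def lift(word: List[int], start: Vertex = ()) -> List[Vertex]:
    """Vertices visited by the lift of the edge loop `word` starting at `start`."""
    path = [start]
    for letter in word:
        path.append(step(path[-1], letter))
    return path


def lift_is_loop(word: List[int]) -> bool:
    """The lift closes up exactly when the word freely reduces to the empty word."""
    path = lift(word)
    return path[0] == path[-1]


print(lift([1, 2, -2, -1]))          # [(), (1,), (1, 2), (1,), ()]
print(lift_is_loop([1, 2, -2, -1]))  # True: null-homotopic, so the lift is a loop
print(lift_is_loop([1, 2, -1, -2]))  # False: the commutator lifts to an open path
```

Because the Cayley graph is a tree, the lift of a null-homotopic edge loop has to backtrack along every edge it crosses, which is what the first printed path shows.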

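Now $D=\tilde{\alpha}([0,1])$ is the continuous image of $[0,1]$ in a Hausdorff space so, by the Hahn-Mazurkiewicz Theorem, $D$ is a Peano continuum. Moreover, since $D$ is path connected and contains $\tilde{b}_0$, we have $D\subseteq\widetilde{\mathbb{E}}$. Since $\widetilde{\mathbb{E}}$ contains no simple closed curves, neither does $D$. Therefore, $D$ is a dendrite. Finally, we apply the theorem (from earlier in this post) that all dendrites are contractible. Since $\tilde{\alpha}$ factors through a contractible space, it is null-homotopic in $\widetilde{\mathbb{E}}$. Both $p\circ\tilde{\alpha}$ and $\alpha$ project to $\alpha_n$ in every $X_n$, so $p\circ\tilde{\alpha}=\alpha$. We conclude that $\alpha=p\circ\tilde{\alpha}$ is null-homotopic in $\mathbb{E}$. This completes the proof that $\ker\Psi$ is trivial.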

Concluding Thoughts

Where did the null-homotopy of $\alpha$ come from? It would have required a super-technical effort to build an explicit homotopy so we passed the hard work off to Borsuk’s Theorem that dendrites are contractible. Using Part I and the Hahn-Mazurkiewicz Theorem, we could say that the space $D=\tilde{\alpha}([0,1])$ is a dendrite in the first place. Then follow any proof of the contractibility of dendrites to finish the job.

Notice that we basically didn’t use anything specific about the earring space except that the universal covers of the approximating spaces are trees! So we could replace the earring with any inverse limit of graphs and the same proof would go through. So actually, we proved:

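One-Dimensional Shape Injectivity Theorem: If $X=\varprojlim_n X_n$ is an inverse limit of based graphs $(X_n,x_n)$ with basepoint $x_0\in X$, then the canonical induced homomorphism $\pi_1(X,x_0)\to\varprojlim_n\pi_1(X_n,x_n)$ to the inverse limit of free groups is injective.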

For example, any one-dimensional Peano continuum (including the Menger Curve and Sierpinski Carpet) can be written as the inverse limit of finite graphs and falls within the scope of this useful theorem.

If you feel comfortable with the end of the proof, then you can also prove as a quick exercise that every inverse limit of graphs is aspherical, i.e. has trivial higher homotopy groups!

References.

[1] H. Fischer and A. Zastrow, Generalized universal covering spaces and the shape group, Fund. Math. 197 (2007) 167-196.

[2] S. Nadler, Continuum Theory: An Introduction, Chapman & Hall/CRC Pure and Applied Mathematics. 1992.